Linux Performance Monitoring and Optimization: Complete Guide

📑 Table of Contents

- Why Performance Monitoring Matters

- Understanding Linux Performance Metrics

- Essential Performance Monitoring Tools

- Advanced Process Monitoring

- Memory Performance Analysis

- Disk I/O Performance Monitoring

- Network Performance Analysis

- Network Latency and Packet Loss

- System-Wide Performance Monitoring

- Performance Optimization Strategies

- Disk Performance Tuning

- Network Optimization Techniques

- Kernel Parameter Tuning

- Benchmarking and Load Testing

- Troubleshooting Performance Issues

- Best Practices for Performance Management

Why Performance Monitoring Matters

System performance can make or break your applications, user experience, and business operations. Whether you’re managing a single server or an entire infrastructure, understanding how to monitor and optimize Linux performance is a critical skill. Poor performance leads to frustrated users, wasted resources, and potentially significant financial losses.

Modern Linux systems are complex ecosystems where CPU, memory, disk I/O, and network resources must work in harmony. Performance issues rarely announce themselves clearly—they manifest as slow response times, application crashes, or mysterious system behavior. Learning to identify, diagnose, and resolve these issues separates competent administrators from exceptional ones.

Understanding Linux Performance Metrics

Before diving into tools and techniques, you need to understand what metrics matter. CPU utilization measures how much processing power is being consumed. High CPU usage isn’t always bad—it means your system is doing work. However, consistently maxed-out CPU indicates bottlenecks that need addressing.

Memory usage requires nuanced interpretation. Linux aggressively caches data in RAM, so seeing high memory usage is normal and beneficial. What matters is swap usage—when your system starts swapping to disk, performance degrades dramatically. Understanding the difference between buffers, cache, and actual application memory usage prevents misdiagnosis.

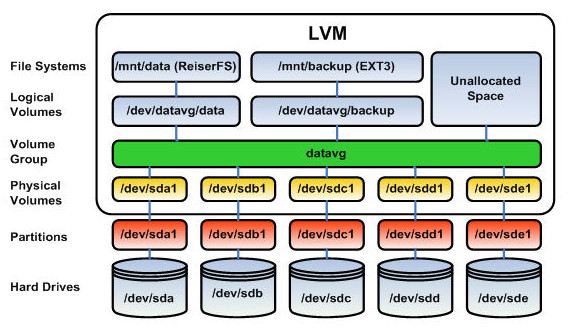

Disk I/O performance affects everything from database queries to application loading times. Metrics like IOPS (Input/Output Operations Per Second), throughput, and latency tell different parts of the storage performance story. Network performance involves bandwidth utilization, packet loss, latency, and connection statistics—all crucial for modern distributed applications.

Essential Performance Monitoring Tools

The top command is every Linux administrator’s starting point. This real-time process monitor shows CPU usage, memory consumption, and active processes. While basic, top provides immediate insight into what’s happening on your system. Understanding load averages—those three numbers at the top—helps you gauge system health over one, five, and fifteen-minute intervals.
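
As a rough illustration of reading those three numbers, the Python sketch below parses a line in the /proc/loadavg format and divides each value by the core count. The helper names and the sample line are assumptions made for this example, and a per-core value near or above 1.0 is only a rule of thumb, since Linux load also counts tasks blocked in uninterruptible I/O.

```python
def parse_loadavg(text):
    """Return the 1-, 5-, and 15-minute load averages from /proc/loadavg text."""
    one, five, fifteen = text.split()[:3]
    return float(one), float(five), float(fifteen)


def load_per_core(loads, cores):
    """Divide each load average by the number of CPU cores."""
    return tuple(round(value / cores, 2) for value in loads)


# Hypothetical sample line; on a live system you could pass
# open("/proc/loadavg").read() and os.cpu_count() instead.
sample = "3.20 1.60 0.80 2/345 12345"
print(load_per_core(parse_loadavg(sample), cores=4))  # (0.8, 0.4, 0.2)
```

Here the one-minute figure is higher than the fifteen-minute figure, which suggests load has been rising recently.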

Htop improves upon top with a more intuitive, colorful interface. It provides better process management, easier navigation, and clearer resource visualization. The ability to scroll through processes, sort by various metrics, and send signals to processes makes htop indispensable for daily administration tasks.

Advanced Process Monitoring

The ps command offers extensive process information beyond what interactive tools provide. Combined with grep and other utilities, ps becomes powerful for scripting and automation. You can see every process detail—from command-line arguments to resource consumption to parent-child relationships.

For detailed CPU analysis, mpstat breaks down processor statistics per CPU core. On multi-core systems, this granularity reveals whether workloads are properly distributed or if certain cores are bottlenecked. Understanding user time versus system time helps identify whether applications or the kernel are consuming cycles.
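
To make the user-versus-system split concrete, here is a minimal Python sketch that derives per-core percentages from two snapshots in the /proc/stat format, which is the same kernel data mpstat reports. The function names and sample snapshots are hypothetical, and grouping irq and softirq time with system time is a simplification chosen for this example.

```python
def parse_cpu_times(stat_text):
    """Map each per-core label (cpu0, cpu1, ...) to its list of tick counters."""
    times = {}
    for line in stat_text.splitlines():
        fields = line.split()
        # Skip the aggregate "cpu" row and non-CPU rows such as "intr".
        if fields and fields[0].startswith("cpu") and fields[0] != "cpu":
            times[fields[0]] = [int(value) for value in fields[1:]]
    return times


def user_system_percent(before, after):
    """Return {core: (user %, system %)} for the interval between two samples."""
    result = {}
    for core, new in after.items():
        deltas = [n - o for n, o in zip(new, before[core])]
        total = sum(deltas[:8])  # user through steal
        if total == 0:
            continue
        user = deltas[0] + deltas[1]                # user + nice
        system = deltas[2] + deltas[5] + deltas[6]  # system + irq + softirq
        result[core] = (round(100 * user / total, 1),
                        round(100 * system / total, 1))
    return result


# Hypothetical snapshots taken a few seconds apart.
first = "cpu0 200 0 50 750 0 0 0 0 0 0\ncpu1 100 0 50 850 0 0 0 0 0 0"
second = "cpu0 290 0 60 850 0 0 0 0 0 0\ncpu1 110 0 130 960 0 0 0 0 0 0"
print(user_system_percent(parse_cpu_times(first), parse_cpu_times(second)))
```

In this sample, cpu0 spends most of its busy time in user space while cpu1 is dominated by kernel time, the kind of imbalance that per-core statistics are meant to expose.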

Memory Performance Analysis

The free command provides a quick memory overview, but vmstat offers deeper insights. Vmstat shows virtual memory statistics including swap usage, swap-in and swap-out rates, and system activity. If you see sustained nonzero values in the ‘si’ (swap in) and ‘so’ (swap out) columns, your system is thrashing, repeatedly moving data between RAM and disk.
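
The swap rates can also be approximated directly from the kernel counters. The sketch below, written in Python under the assumption of a 4096-byte page size, reads the pswpin and pswpout counters from two snapshots in the /proc/vmstat format and converts the difference to KiB per second. The helper names and sample values are made up for illustration.

```python
def swap_counters(vmstat_text):
    """Extract the cumulative pswpin/pswpout page counters from /proc/vmstat text."""
    counters = {}
    for line in vmstat_text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[0] in ("pswpin", "pswpout"):
            counters[parts[0]] = int(parts[1])
    return counters


def swap_rates_kib(before, after, seconds, page_size=4096):
    """Return swap-in and swap-out rates in KiB/s (page_size is an assumption)."""
    return {
        name: (after[name] - before[name]) * page_size / 1024 / seconds
        for name in ("pswpin", "pswpout")
    }


# Hypothetical snapshots taken 5 seconds apart.
before = swap_counters("nr_free_pages 1000\npswpin 1000\npswpout 5000")
after = swap_counters("nr_free_pages 900\npswpin 1600\npswpout 9000")
print(swap_rates_kib(before, after, seconds=5))
# {'pswpin': 480.0, 'pswpout': 3200.0}
```

Rates that stay well above zero across many intervals are the pattern to worry about; a single brief burst is usually harmless.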

The /proc/meminfo file contains comprehensive memory information. Reading this file directly reveals details about active versus inactive memory, slab allocations, and kernel memory usage. These advanced metrics help diagnose memory leaks and understand exactly how your system allocates RAM.
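
A short Python sketch of reading this file follows. It parses text in the /proc/meminfo format and separates reclaimable cache from memory that is genuinely free or available. The function names and sample figures are assumptions for illustration, and the MemAvailable field is only present on reasonably recent kernels.

```python
def parse_meminfo(text):
    """Return {field: value} from /proc/meminfo text (most values are in kB)."""
    info = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        fields = rest.split()
        if fields:
            info[name.strip()] = int(fields[0])
    return info


def memory_summary(info):
    """Summarize cache, availability, and swap use from parsed meminfo values."""
    cache = info["Buffers"] + info["Cached"] + info.get("SReclaimable", 0)
    return {
        "cache_kb": cache,
        "free_pct": round(100 * info["MemFree"] / info["MemTotal"], 1),
        "available_pct": round(100 * info["MemAvailable"] / info["MemTotal"], 1),
        "swap_used_kb": info["SwapTotal"] - info["SwapFree"],
    }


# Hypothetical sample; on a live system pass open("/proc/meminfo").read().
sample = "\n".join([
    "MemTotal:        8000000 kB",
    "MemFree:          400000 kB",
    "MemAvailable:    6000000 kB",
    "Buffers:          200000 kB",
    "Cached:          5000000 kB",
    "SwapTotal:       2000000 kB",
    "SwapFree:        2000000 kB",
    "SReclaimable:     300000 kB",
])
print(memory_summary(parse_meminfo(sample)))
```

In this sample only 5% of RAM is free, yet 75% is available because most of the rest is cache the kernel can reclaim, and no swap is in use.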

Memory pressure can manifest in subtle ways. Applications slowing down, increased disk activity, and longer response times often indicate memory constraints. Using tools like smem or looking at per-process memory consumption helps identify memory-hungry applications before they cause system-wide problems.

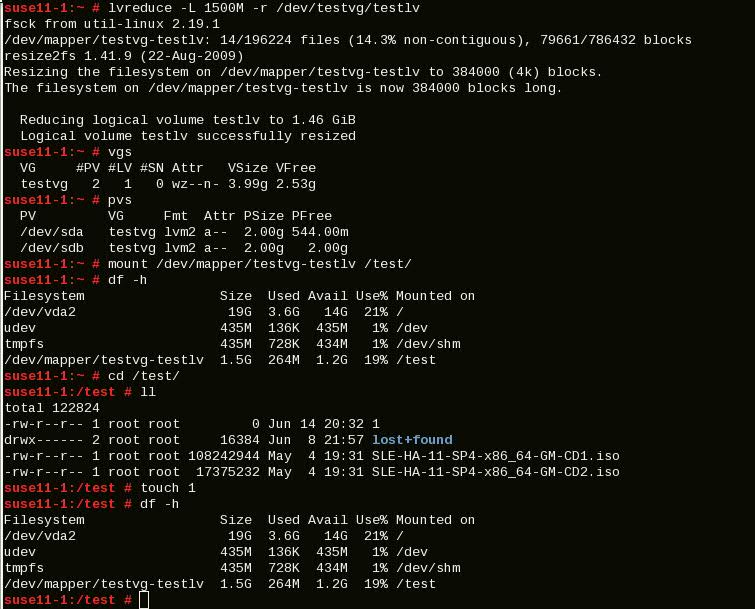

Disk I/O Performance Monitoring

Iostat reveals disk I/O patterns and bottlenecks. This tool shows read/write operations per second, throughput in KB/s, and most importantly, await time: the average time an I/O request takes to complete, including both the time spent waiting in the queue and the time the device spends servicing it. High await times indicate disk subsystem problems that will impact application performance.
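
For readers curious where such a figure comes from, the following Python sketch approximates an average wait time from two snapshots in the /proc/diskstats format: milliseconds spent on reads and writes divided by the number of I/Os completed in the interval. The helper names and sample counters are hypothetical, and this is a simplified reading of the counters rather than a reimplementation of iostat.

```python
def parse_diskstats(text):
    """Return {device: (ios_completed, ms_spent_on_io)} from /proc/diskstats text."""
    stats = {}
    for line in text.splitlines():
        f = line.split()
        if len(f) < 11:
            continue
        # f[3] reads completed, f[6] ms reading, f[7] writes completed, f[10] ms writing
        stats[f[2]] = (int(f[3]) + int(f[7]), int(f[6]) + int(f[10]))
    return stats


def average_wait_ms(before, after):
    """Average milliseconds per completed I/O between two snapshots."""
    result = {}
    for device, (ios, ms) in after.items():
        if device not in before:
            continue
        completed = ios - before[device][0]
        if completed > 0:
            result[device] = round((ms - before[device][1]) / completed, 2)
    return result


# Hypothetical snapshots for a device named sda.
first = "   8       0 sda 1000 0 8000 2000 500 0 4000 3000 0 2500 5000"
second = "   8       0 sda 1100 0 8800 2600 600 0 4800 5400 0 3000 8000"
print(average_wait_ms(parse_diskstats(first), parse_diskstats(second)))
# {'sda': 15.0}
```

What counts as high depends on the device: a value that is ordinary for a spinning disk can signal trouble on an SSD.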

The iotop command functions like top but for disk I/O. It shows which processes are generating disk activity, helping you identify runaway processes or applications with poor I/O patterns. During performance troubleshooting, iotop quickly reveals whether disk activity is causing slowdowns.

Modern storage systems use various schedulers and caching mechanisms. Your choice of I/O scheduler affects performance significantly. Older kernels offered CFQ, deadline, and noop; kernels from 5.0 onward use the multi-queue schedulers mq-deadline, BFQ, kyber, and none. SSDs and traditional spinning disks require different optimization approaches, and what works for one can harm the other’s performance.
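
To check which scheduler a device is using, you can read its queue/scheduler file under /sys/block, where the active choice appears in square brackets. The Python sketch below parses that format; the function name and the sample string are assumptions for illustration.

```python
def active_scheduler(text):
    """Return (active, available) from the content of a queue/scheduler file."""
    active = None
    available = []
    for name in text.split():
        if name.startswith("[") and name.endswith("]"):
            name = name[1:-1]  # the bracketed entry is the one in use
            active = name
        available.append(name)
    return active, available


# Hypothetical content; on a live system you might read
# /sys/block/sda/queue/scheduler (sda is an example device name).
print(active_scheduler("mq-deadline kyber [bfq] none"))
# ('bfq', ['mq-deadline', 'kyber', 'bfq', 'none'])
```

The list of available names varies with the kernel version and the modules that are loaded.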

Network Performance Analysis

Network bottlenecks are often overlooked but can severely impact distributed applications. The netstat command shows active connections, listening ports, and routing tables. Although netstat is deprecated in favor of ss on modern systems, knowing both tools provides flexibility across different Linux distributions.

The ss command provides faster, more detailed socket statistics than netstat. It shows TCP connection states, buffer utilization, and detailed protocol information. When diagnosing network issues, ss reveals whether connections are properly established or stuck in unusual states.
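
As an illustration of spotting connections stuck in unusual states, this Python sketch tallies TCP states from text in the /proc/net/tcp format, where the fourth column holds a hexadecimal state code. The helper name and sample rows are hypothetical; in practice ss presents the same information more conveniently.

```python
from collections import Counter

# Kernel TCP state codes as they appear in /proc/net/tcp.
TCP_STATES = {
    "01": "ESTABLISHED", "02": "SYN_SENT", "03": "SYN_RECV",
    "04": "FIN_WAIT1", "05": "FIN_WAIT2", "06": "TIME_WAIT",
    "07": "CLOSE", "08": "CLOSE_WAIT", "09": "LAST_ACK",
    "0A": "LISTEN", "0B": "CLOSING",
}


def count_tcp_states(text):
    """Count sockets per TCP state, skipping the header row."""
    counts = Counter()
    for line in text.splitlines()[1:]:
        fields = line.split()
        if len(fields) > 3:
            counts[TCP_STATES.get(fields[3], fields[3])] += 1
    return dict(counts)


# Hypothetical sample with a shortened set of columns.
sample = "\n".join([
    "  sl  local_address rem_address   st tx_queue rx_queue",
    "   0: 0100007F:0CEA 00000000:0000 0A 00000000:00000000",
    "   1: 0100007F:0CEA 0100007F:D431 01 00000000:00000000",
    "   2: 0100007F:0CEA 0100007F:D432 08 00000000:00000000",
    "   3: 0100007F:0CEA 0100007F:D433 08 00000000:00000000",
])
print(count_tcp_states(sample))
```

A growing CLOSE_WAIT count, as hinted at in this sample, typically means an application is not closing sockets after the peer has disconnected.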

Bandwidth monitoring with tools like iftop, nload, or vnstat shows real-time and historical network usage. These tools help identify bandwidth saturation, unusual traffic patterns, or specific hosts consuming excessive bandwidth. Understanding normal traffic patterns makes anomalies obvious.
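
Underneath, these tools work from per-interface byte counters. A minimal Python sketch, assuming two snapshots in the /proc/net/dev format taken a known number of seconds apart, could estimate throughput like this. The function names, the eth0 interface, and the counter values are all made up for the example.

```python
def parse_net_dev(text):
    """Return {interface: (rx_bytes, tx_bytes)} from /proc/net/dev text."""
    counters = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # the two header lines contain no colon
        name, _, rest = line.partition(":")
        fields = rest.split()
        if len(fields) >= 9:
            # Column 0 is received bytes, column 8 is transmitted bytes.
            counters[name.strip()] = (int(fields[0]), int(fields[8]))
    return counters


def mbit_per_second(before, after, seconds):
    """Return {interface: (rx Mbit/s, tx Mbit/s)} between two snapshots."""
    rates = {}
    for name, (rx, tx) in after.items():
        if name in before:
            old_rx, old_tx = before[name]
            rates[name] = (round((rx - old_rx) * 8 / seconds / 1_000_000, 2),
                           round((tx - old_tx) * 8 / seconds / 1_000_000, 2))
    return rates


# Hypothetical snapshots taken 10 seconds apart.
first = "  eth0: 1000000 900 0 0 0 0 0 0 500000 700 0 0 0 0 0 0"
second = "  eth0: 13500000 9900 0 0 0 0 0 0 3000000 2700 0 0 0 0 0 0"
print(mbit_per_second(parse_net_dev(first), parse_net_dev(second), seconds=10))
# {'eth0': (10.0, 2.0)}
```

Comparing these rates with the link speed shows how close an interface is to saturation.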

Network Latency and Packet Loss

Ping and mtr (my traceroute) diagnose connectivity and latency issues. While ping provides basic reachability testing, mtr combines ping and traceroute functionality, showing where latency or packet loss occurs along the route to a destination. This granularity is invaluable for diagnosing network problems beyond your direct control.

The tcpdump and Wireshark tools capture and analyze network packets. When applications behave mysteriously, examining actual network traffic often reveals protocol issues, misconfigured services, or application bugs. Understanding packet-level details separates surface-level troubleshooting from root cause analysis.

System-Wide Performance Monitoring

SAR (System Activity Reporter) collects and reports comprehensive system statistics over time. Unlike real-time tools, sar maintains historical data, enabling trend analysis and capacity planning. You can review yesterday’s CPU usage, last week’s memory consumption, or month-long I/O patterns, provided the sysstat retention period is set long enough.

Configuring sar through sysstat provides automatic data collection at regular intervals. This historical perspective is crucial for identifying patterns—perhaps performance degrades every Tuesday at 2 AM during backup operations, or memory slowly leaks over weeks. Real-time tools miss these temporal patterns.

Modern monitoring solutions like Prometheus, Grafana, and Netdata provide sophisticated dashboards and alerting. While these require more setup than command-line tools, they offer powerful visualization, historical analysis, and proactive alerting before users notice problems.

Performance Optimization Strategies

Once you’ve identified bottlenecks, optimization begins. CPU optimization starts with identifying processes consuming excessive cycles. Sometimes applications can be tuned, configurations adjusted, or processes scheduled differently. Process priority adjustment with nice and renice influences scheduling, ensuring critical applications get resources first.

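Memory optimization involves understanding application behavior. Memory leaks require code fixes, but you can mitigate impact through process restarts or resource limits. Adjusting swappiness (the vm.swappiness kernel parameter, 60 by default) balances RAM usage and swap utilization: lower values make the kernel prefer dropping page cache over swapping out application memory. Reducing swappiness often helps latency-sensitive workloads such as databases, but measure the effect on your own workload rather than assuming it.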

Disk Performance Tuning

Filesystem choice significantly impacts performance. Ext4 remains reliable and well-tested, XFS excels with large files and parallel I/O, and Btrfs offers advanced features with some performance overhead. Choosing the right filesystem for your workload prevents future bottlenecks.

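Mount options affect performance considerably. Options like noatime stop the kernel from writing an access-time update every time a file is read, which helps most on systems with heavy read activity. Most current distributions already default to relatime, which limits these updates, so the gain from noatime is smaller than it once was. Leaving enough RAM for the kernel’s page cache also reduces I/O wait times, since cached reads never reach the disk.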
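
One way to audit access-time settings is to scan the mount table. The Python sketch below reads text in the /proc/mounts format and reports which access-time option each disk-backed filesystem uses. The function name, the list of filesystem types, and the sample mounts are assumptions for this example.

```python
def atime_settings(mounts_text, fs_types=("ext4", "xfs", "btrfs")):
    """Return {mount_point: access-time option} for the given filesystem types."""
    result = {}
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) < 4 or fields[2] not in fs_types:
            continue
        options = fields[3].split(",")
        for mode in ("noatime", "relatime", "strictatime"):
            if mode in options:
                result[fields[1]] = mode
                break
        else:
            result[fields[1]] = "unspecified"
    return result


# Hypothetical sample; on a live system pass open("/proc/mounts").read().
sample = "\n".join([
    "/dev/sda1 / ext4 rw,relatime 0 0",
    "/dev/sdb1 /var/lib/data xfs rw,noatime,attr2 0 0",
    "tmpfs /run tmpfs rw,nosuid,nodev 0 0",
])
print(atime_settings(sample))
# {'/': 'relatime', '/var/lib/data': 'noatime'}
```

Mount points that report relatime on a read-heavy volume are candidates for testing with noatime.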

For databases and high-I/O applications, separating data across multiple disks or using RAID improves throughput and reduces latency. Understanding RAID levels and their performance characteristics ensures you choose appropriate configurations for your needs.

Network Optimization Techniques

Network performance tuning involves several kernel parameters. TCP buffer sizes affect throughput, especially on high-latency or high-bandwidth connections. The congestion control algorithm influences how TCP responds to network conditions. BBR (Bottleneck Bandwidth and RTT) often outperforms loss-based algorithms such as CUBIC on high-latency or lossy paths.
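
A useful starting point for sizing TCP buffers is the bandwidth-delay product: the amount of data that can be in flight on a path at once. The Python sketch below works through that arithmetic; the function name and the example link figures are illustrative assumptions, not recommended settings.

```python
def bdp_bytes(bandwidth_bits_per_s, rtt_ms):
    """Bandwidth-delay product in bytes: bandwidth times round-trip time."""
    # bits/s * ms / 1000 gives bits in flight; dividing by 8 converts to bytes.
    return bandwidth_bits_per_s * rtt_ms // 8000


# A 1 Gbit/s path with a 50 ms round trip.
print(bdp_bytes(1_000_000_000, 50))  # 6250000
# A 100 Mbit/s path with a 20 ms round trip.
print(bdp_bytes(100_000_000, 20))    # 250000
```

If the maximum buffer allowed by settings such as net.ipv4.tcp_rmem and net.ipv4.tcp_wmem is well below this figure, a single connection cannot fill the link.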

Receive-side scaling (RSS) distributes network processing across CPU cores, while interrupt coalescing batches several packets into each interrupt to reduce per-packet overhead. On high-throughput systems, these settings prevent a single CPU core from bottlenecking network performance. Modern NICs also support various offload features that reduce CPU overhead.

Quality of Service (QoS) configurations prioritize important traffic during congestion. Rate limiting prevents individual applications or users from consuming all available bandwidth. These techniques ensure consistent performance even during peak usage periods.

Kernel Parameter Tuning

Sysctl provides access to kernel parameters controlling system behavior. Parameters are organized hierarchically under /proc/sys/ and can be modified temporarily with sysctl -w or permanently through /etc/sysctl.conf or a file in /etc/sysctl.d/. Understanding which parameters affect performance requires careful research and testing.
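
The relationship between a parameter name and its file can be sketched in a few lines of Python. The example below maps dotted names to paths under /proc/sys and parses settings from text in the sysctl.conf format. The function names are hypothetical, the simple dot-to-slash mapping ignores unusual names that themselves contain dots, and the values shown are placeholders rather than recommendations.

```python
def sysctl_path(name, root="/proc/sys"):
    """Map a dotted parameter name to its file under /proc/sys."""
    return root + "/" + name.replace(".", "/")


def parse_sysctl_conf(text):
    """Return {name: value} from sysctl.conf-style text, ignoring comments."""
    settings = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line[0] in "#;":
            continue  # blank line or comment
        name, sep, value = line.partition("=")
        if sep:
            settings[name.strip()] = value.strip()
    return settings


# Placeholder values for illustration only.
sample = "\n".join([
    "# memory behavior",
    "vm.swappiness = 10",
    "net.core.rmem_max=16777216",
])
for name, value in parse_sysctl_conf(sample).items():
    print(sysctl_path(name), "->", value)
```

Reading the file at each printed path on a live system shows the value currently in effect, which is a quick way to confirm that a configured setting was actually applied.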

Common tuning targets include virtual memory behavior, network stack parameters, and filesystem caching. The vm.swappiness parameter controls swap aggressiveness, net.core buffers affect network performance, and fs.file-max sets system-wide file descriptor limits. Changes should be made incrementally with proper testing.

Kernel tuning requires caution—inappropriate settings can destabilize systems or actually harm performance. Always document changes, test thoroughly, and maintain the ability to revert. Performance tuning is iterative; one change affects others, requiring continuous measurement and adjustment.

Benchmarking and Load Testing

Before and after optimization, benchmarking provides objective performance measurements. Tools like sysbench test CPU, memory, and disk performance with standardized workloads. Comparing results before and after changes validates whether optimizations actually helped.
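
When comparing runs, a single measurement can mislead, so it helps to repeat each benchmark and compare a robust statistic. The Python sketch below compares medians of two sets of timings; the function name and the sample latencies are invented for illustration.

```python
from statistics import median


def percent_change(before, after):
    """Percentage change in the median from the first set of runs to the second."""
    base = median(before)
    return round(100 * (median(after) - base) / base, 1)


# Hypothetical request latencies in milliseconds from five runs each.
before_tuning = [120, 118, 125, 119, 121]
after_tuning = [102, 99, 104, 103, 100]
print(percent_change(before_tuning, after_tuning))  # -15.0
```

A negative result means latency dropped. If the spread within each set of runs is as large as the change itself, the difference may just be noise.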

Application-specific load testing reveals real-world performance. Tools like Apache Bench (ab), siege, or JMeter simulate user load, exposing bottlenecks that won’t appear in synthetic benchmarks. Testing with realistic workloads prevents optimizing for the wrong scenarios.

Continuous performance monitoring catches regressions early. After optimization, ongoing measurement ensures performance remains stable. Automated alerting on performance degradation enables proactive response before users complain.

Troubleshooting Performance Issues

Effective troubleshooting follows systematic methodology. Start by defining the problem clearly: “slow” is vague; “database queries taking over 5 seconds when they previously took under 1 second” is actionable. Establish baseline performance to understand what “normal” looks like.

Use the scientific method: form hypotheses about causes, test them systematically, and measure results. Tools like strace reveal system call patterns, helping identify whether performance issues stem from excessive I/O, network delays, or computation. Profiling tools show exactly where applications spend time.

Performance issues often have multiple contributing factors. Fixing CPU bottlenecks might expose disk I/O problems. Memory pressure can manifest as CPU load when the system constantly swaps. Holistic analysis prevents playing whack-a-mole with symptoms while ignoring root causes.

Best Practices for Performance Management

Establish performance baselines during normal operation. Understanding typical CPU usage, memory consumption, and I/O patterns makes anomalies obvious. Document your system’s normal behavior across different times and conditions.
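
One simple way to turn a baseline into an automated check is to flag readings that sit far from the baseline mean. The Python sketch below uses a three-standard-deviation threshold, which is an arbitrary choice for this example, as are the function name and the sample CPU figures.

```python
from statistics import mean, stdev


def is_anomalous(baseline, value, threshold=3.0):
    """True if value is more than `threshold` standard deviations from the baseline mean."""
    center = mean(baseline)
    spread = stdev(baseline)
    if spread == 0:
        return value != center
    return abs(value - center) / spread > threshold


# Hypothetical CPU utilization percentages recorded during normal operation.
baseline = [40, 42, 38, 41, 39, 40, 43, 37]
print(is_anomalous(baseline, 45))  # False
print(is_anomalous(baseline, 47))  # True
```

Keeping separate baselines for different times of day or days of the week avoids flagging predictable peaks, such as nightly backups, as anomalies.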

Implement monitoring before problems occur. Reactive troubleshooting is stressful; proactive monitoring provides early warnings. Set up alerts for concerning thresholds—high CPU usage, memory exhaustion, or disk space shortage—allowing intervention before outages.

Regular capacity planning prevents performance crises. Analyze trends to predict when resources will become constrained. Adding capacity before exhaustion is cheaper and less stressful than emergency scaling during outages.
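
Trend analysis can start very simply. The Python sketch below fits a straight line through usage samples with ordinary least squares and estimates how many days remain before a capacity limit is reached. The function name and the disk-usage figures are hypothetical, and real growth is rarely perfectly linear, so treat the result as a rough guide.

```python
def days_until_full(samples, capacity):
    """Estimate days from the last sample until usage reaches capacity.

    samples is a list of (day, used) pairs. Returns None when usage is
    flat or shrinking, or when there is too little data to fit a line.
    """
    n = len(samples)
    mean_x = sum(day for day, _ in samples) / n
    mean_y = sum(used for _, used in samples) / n
    sxx = sum((day - mean_x) ** 2 for day, _ in samples)
    if sxx == 0:
        return None
    sxy = sum((day - mean_x) * (used - mean_y) for day, used in samples)
    slope = sxy / sxx  # growth per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    full_day = (capacity - intercept) / slope
    return round(full_day - samples[-1][0], 1)


# Hypothetical weekly disk usage readings in GB on a 600 GB volume.
usage = [(0, 500), (7, 514), (14, 528), (21, 542)]
print(days_until_full(usage, capacity=600))  # 29.0
```

With about a month of headroom in this sample, there is still time to add capacity in a planned way.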

About Ramesh Sundararamaiah

Red Hat Certified Architect

Expert in Linux system administration, DevOps automation, and cloud infrastructure. Specializing in Red Hat Enterprise Linux, CentOS, Ubuntu, Docker, Ansible, and enterprise IT solutions.